PIC computation time benchmarks

Overview

The goal of this task is to determine the speed at which the PIC 18F4520 (with 20MHz clock) makes certain calculations. There are many different ways to calculate common functions, such as Taylor expansion versus using the sine command. Some of these methods are faster than others, which could effect your program. When a program is dependent on time, such as while using an interrupt service routine for dead reckoning, a slower calculation might not complete within the ISR. Therefore, it is important to use the most efficient method for simple math and trigonometric functions. The following is empirical data of the speed to which certain data types conduct functions.

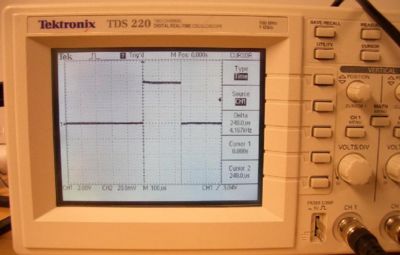

The process involved programming the function onto a PIC from a PC and using an oscilloscope to measure the time it took to complete. First, we checked to see if our procedure was free of errors. This was done by programming in a simple delay (e.g., 10μs to 50ms) and measuring its accuracy using the cursor feature on the oscilloscope. A digital output was used. The program would output high, run the delay, output low and run another delay. This would effectively display a square wave, where each delay should represent either the logic low or high on the scope. We found no error in our procedure. We then replaced a delay with a function (e.g., 2 + 5). Eventually we changed the code to conduct the function ten times consecutively. This was done to make oscilloscope measurement easier. The number of iterations of the function corresponded linearly with the time, so the time it took to do one function equated to ten times longer for ten functions, or one hundred times longer for one hundred functions. Our findings are summarized below.

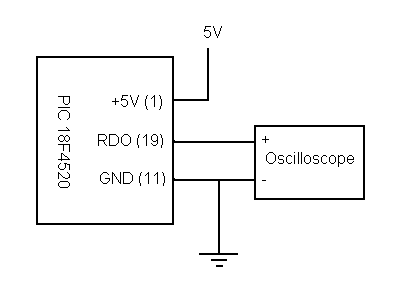

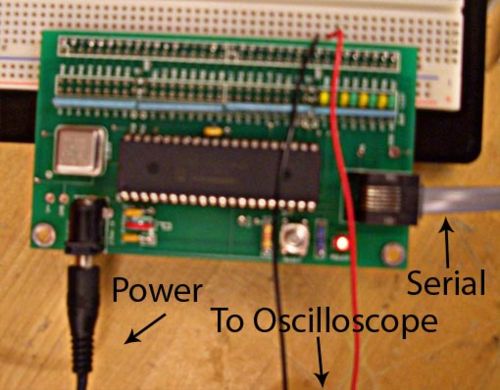

Circuit

The circuit to conduct this test required the PIC 18F4520, power source and an oscilloscope hooked up to a digital output & ground. In the example below the wall power source was used, but a battery could be interchanged. The serial connection was used to update the PIC with each new test written with PIC-C on a nearby PC.

An example of an oscilloscope reading can be seen below right. Test readings were accurate with 1, 10 and 100 iteration tests. The cursor function was used to measure the duration of the high output.

Code

#include <18f4520.h>

#include <math.h>

#fuses HS,NOLVP,NOWDT

#use delay (clock=20000000)

#define LED_0 PIN_D0

void main(void) {

float z;

float x=0;

float y;

float xsquared, xcubed, xfifth, xseventh, xninth;

while(TRUE){

output_high(LED_0); // Do not use loop for the calculations because it will take time to iterate

//ninth order Taylor series (sine). Multiply by 1/n! instead of dividing by n! (multiplication is faster)

xsquared = x*x; //use these variables to keep calculation time down (instead of x*x*x*x*x....)

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333) - (xseventh*.0001984127) + (xninth*.00000275573);

/*

z = x + y; // code for simple math, change operations and variable types

z = x + y;

z = x + y;

z = x + y;

z = x + y;

z = x + y;

z = x + y;

z = x + y;

z = x + y;

z = x + y;

*/

output_low(LED_0);

//seventh order Taylors series (sine)

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

xsquared = x*x;

xcubed=xsquared*x;

xfifth=xcubed*xsquared;

xseventh=xfifth*xsquared;

xninth=xseventh*xsquared;

z = x - (xcubed*0.1666666) + (xfifth*.008333333);

/*

z = sqrt(x); //square root test

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

z = sqrt(x);

*/

}

}

Results

Simple Math Functions

The below table contains the results from our operation testing. All the values are given in μs and the value in parentheses is the time normalized to the quickest operation in the entire table.

|

Function |

Int8 |

Int16 |

Int32 |

Signed Int8 |

Signed Int16 |

Signed Int32 |

Float |

|

Addition (+) |

0.64μs (1.00) |

1.24μs (1.94) |

2.44μs (3.81) |

0.64μs (1.00) |

1.24μs (1.94) |

2.44μs (3.81) |

25.6μs (40.00) |

|

Subtraction (-) |

0.68μs (1.06) |

1.28μs (2.00) |

2.48μs (3.88) |

0.68μs (1.06) |

1.28μs (2.00) |

2.48μs (3.88) |

29.8μs (46.56)* |

|

Multiplication (*) |

0.8μs (1.25) |

6.0μs (9.38) |

45.0μs (70.31) |

0.92μs (1.44) |

6.0μs (9.38) |

46.0μs (71.88) |

26.0μs-28.0μs (40.63-43.75) |

|

Division (/) |

19.4μs (30.31) |

60.8μs (95.00) |

195.0μs (304.69)* |

22.4μs (35.00) |

64.4μs (100.63) |

201.0μs (314.06)* |

176.0μs-226.0μs (275.00-353.13) |

|

And (&) |

0.64μs (1.00) |

1.24μs (1.94) |

2.44μs (3.81) |

|

|

|

|

|

Or ( | ) |

0.68μs (1.06) |

1.28μs (2.00) |

2.48μs (3.88) |

|

|

|

|

|

Shift (>>1) |

0.64μs (1.00) |

1.04μs (1.63) |

1.84μs (2.88) |

|

|

|

|

|

Shift (>>2) |

1.04μs (1.63) |

1.64μs (2.56) |

2.84μs (4.44) |

|

|

|

|

|

Shift (>>3) |

1.24μs (1.94) |

2.04μs (3.19) |

3.64μs (5.69) |

|

|

|

|

|

Shift (<<1) |

0.68μs (1.06) |

1.08μs (1.69) |

1.88μs (2.94) |

|

|

|

|

|

Shift (<<2) |

1.08μs (1.69) |

1.68μs (2.63) |

2.88μs (4.50) |

|

|

|

|

|

Shift (<<3) |

1.28μs (2.00) |

2.08μs (3.25) |

3.68μs (5.75) |

|

|

|

|

Table 1.1 Simple Math Functions ( * Indicates an averaged field)

As you can see, there are clear advantages to using smaller data types. There are even some operations that take slower with signed data types versus unsigned. Most all of the data was constant for small and large values for these simple math operations. For float data types, differing values tended to vary in time. Variability was also seen in some int32 calculations. These results are either given as an experimental range (if significant variability) or averaged (less than 4% variability).

Chapter 8 of the PIC manual lists the computation time for several multiply operations. Table 1.2 shows the values from the PIC manual compared to our measurements. As you can see, the calculation times are within an order of magnitude from each other. One difference is that in our measurements signed and unsigned multiplication took nearly the same amount of time. This was unexpected as the algorithm for signed multiplication (as outlined in the PIC manual) should take longer than unsigned multiplication.

|

Routine |

Time at 20 MHz clock speed* |

Measured Values |

|

int8 x int8 (unsigned) |

0.2 us |

0.8 us |

|

int8 x int8 (signed) |

1.2 us |

0.92 us |

|

int16 x int16 (unsigned) |

5.6 us |

6.0 us |

|

int16 x int16 (signed) |

8.0 us |

6.0 us |

Table 1.2 Multiplication times compared to PIC manual values (* Times derived from Table 8-1 of PIC manual)

Multiplication, Division & Shifts

For most integer types shifting is much quicker than multiplication and division. The exception is shifting more than one place for Int8 will take slightly longer. Therefore, if you are multiplying by 2# (where # is any positive integer value) it is faster to use a shift. The same is true if you are dividing by any factor of 2#. In this case shifting to the right divides and vice versa for multiplication.

Division is much slower than multiplication across all data types. If you are computing a float it is much faster to multiply by a reciprocal. Below is a table of two functions that compute the same value but at very different speeds.

|

Function |

Time |

|

2 * 0.1 |

28μs (1.00) |

|

2 / 10 |

200μs (7.14) |

|

2* 0.001 |

28μs (1.00) |

|

2 / 1000 |

220μs (7.86) |

Trigonometric Functions & Square Root

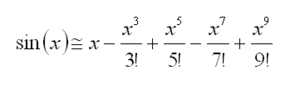

Table 1.3 shows the results from the trigonometric, square root, and Taylor Expansion tests. The Taylor expansion example below is a ninth order. To conduct seventh or fifth order expansions one just drops out the higher terms. Sine and cosine functions take about the same time with tangent being slightly slower. Also, the square root function was slightly slower than the sine function. Note that the fast cosine value (0.24 ms) was for cos(0). Other than this value the time range closely matches that for sine. As shown the Taylor series approximation for sine is faster than using the sine command. It is important to note from our code that we multiplied by a reciprocal. Computing a Taylor series with division takes just a long as using the sine function. As expected, a higher order approximation will take more time. Depending on the amount of accuracy you need, using the Taylor approximation will save a significant amount of time.

|

Function |

Time Range (normalized) |

Error |

|

Sin |

0.27-1.20 ms (3.80-16.9) |

0 |

|

Sin (Taylor-9th order) |

0.106-0.380 ms (1.49-5.35) |

0.006925 |

|

Sin (Taylor-7th order) |

0.089-0.312 ms (1.25-4.39) |

0.07522 |

|

Sin (Taylor-5th order) |

0.071-0.252 ms (1.00-3.55) |

0.5240 |

|

Cos |

0.24-1.14 ms (3.38-16.1) |

n/a |

|

Tan |

1.37-2.52 ms (19.3-35.5) |

n/a |

|

Sqrt |

0.98-1.26 ms (13.8-17.7) |

n/a |

Table 1.3 Trigonometric Functions and Square Root (all measurements are for float variables)

Algorithms for Square Root and Trigonometric Functions

There are several algorithms that can be used to compute the square root. The basic steps are:

- 1. Start with an initial guess, x0.

- 2. Multiply x0 by itself (or perform a different calculation).

- 3. See how close this solution is to the argument.

- 4. Change x0 and iterate until you are close enough to the argument.

One such algorithm is the Babylonian method. The way the PIC chooses the initial value, x0, can affect the speed of the calculation. One way of choosing x0 is to set it equal to with D equal to the number of binary digits in the argument. The closer x0 is to the actual answer the faster the computation will be. This is why there is a range for computation times because the initial value the PIC chooses will affect the algorithm speed. The algorithm also conducted an initial test of validity. Values less than zero were immediately rejected, because this algorithm does not compute imaginary numbers. We also noticed that very large numbers (we tried 1017) were also rejected. This initial validity test also contributes to slower calculation speeds.

One method that the PIC can use to calculate trigonometric functions is the CORDIC algorithm. CORDIC is a type of shift-and-add algorithm that rotates a vector. An initial vector, v0, is set and then multiplied by a rotation matrix. The rotation of the vector is repeated until its angle is close to the desired angle, . The sine of is the vector's projection onto the y-axis, while the cosine is the projection onto the x-axis. The number of iterations that the algorithm has to make depends on the angle, . Thus, the PIC's computation time for trigonometric functions will vary depending on the angle.